By Art Reisman

CTO, APconnections

Over the last year or so, when the work day is done, I often find myself talking shop with several peers of mine who run wireless networking companies. These are the guys in the trenches. They spend their days installing wireless infrastructure in apartment buildings, hotels, and professional sports arenas, to name just a few. Below I share a few tidbits intended to provide a high-level picture for anybody thinking about building their own wireless network.

There are no experts.

Why? Competition between wireless manufacturers is intense. Yes, the competition is great for innovation, and wireless technology has certainly come a long way in the last 10 years; however, these fast-paced improvements come at a cost. New learning curves for IT partners, numerous patches, and differing approaches between vendors make it hard for any one person to become an expert. Anybody who works in this industry usually settles in with one manufacturer, perhaps two, because the field is moving too fast to keep up with more.

The higher (faster) the frequency the higher the cost of the network.

Why? As the industry moves to standards that transmit at higher data rates, it must use higher frequencies to achieve the faster speeds. It just so happens that these higher frequencies tend to be less effective at penetrating buildings, walls, and windows. The increase in cost comes from the need to place more and more access points in a building to achieve coverage.
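To put a rough number on the frequency effect, here is a minimal sketch using the standard free-space path loss formula. It only models open air; walls and windows add further loss that varies by material and is not modeled here. The 20 meter distance and the 2.4 GHz and 5 GHz bands are illustrative assumptions, not figures from the discussion above.

```python
import math

def free_space_path_loss_db(distance_m: float, freq_mhz: float) -> float:
    """Free-space path loss in dB (distance in meters, frequency in MHz)."""
    distance_km = distance_m / 1000.0
    # Standard form: 20*log10(d_km) + 20*log10(f_MHz) + 32.44
    return 20 * math.log10(distance_km) + 20 * math.log10(freq_mhz) + 32.44

# Illustrative comparison: the same 20 m path on two common Wi-Fi bands.
loss_2_4 = free_space_path_loss_db(20, 2400)
loss_5 = free_space_path_loss_db(20, 5000)
extra_loss_db = loss_5 - loss_2_4

print(f"2.4 GHz: {loss_2_4:.1f} dB")
print(f"5 GHz:   {loss_5:.1f} dB")
print(f"Extra loss at 5 GHz: {extra_loss_db:.1f} dB")
```

Even before any walls get in the way, the higher band loses several more dB over the same distance, which is one reason each access point covers less area.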

Putting more access points in your building does not always mean better service.

Why? Computers have a bad habit of connecting to one access point and then not letting go, even when the signal gets weak. For example, when you connect to a wireless network with your laptop in the lobby of a hotel and then move across the room, you can end up in a bad spot relative to the access point you originally connected to. In theory, the right thing to do would be to release your current connection and connect to a different access point. The problem is that most installed wireless networks do not have any intelligence built in to get you routed to the best access point; hence even a building with plenty of coverage can have maddening service.
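The "release and reconnect" logic described above can be sketched as a simple roaming rule. This is a hypothetical illustration, not how any particular vendor or client driver does it; the function name, the -70 dBm threshold, and the 8 dB margin are all assumptions chosen for the example.

```python
def pick_access_point(current_ap, rssi_by_ap, roam_threshold_dbm=-70, hysteresis_db=8):
    """Return the access point a client should use.

    rssi_by_ap maps AP name -> signal strength in dBm (closer to 0 is stronger).
    Threshold and hysteresis values are illustrative assumptions.
    """
    if not rssi_by_ap:
        return current_ap  # nothing visible, nothing to roam to
    current_rssi = rssi_by_ap.get(current_ap, float("-inf"))
    # Stay put while the current signal is still acceptable.
    if current_rssi >= roam_threshold_dbm:
        return current_ap
    best_ap = max(rssi_by_ap, key=rssi_by_ap.get)
    # Switch only if the best candidate is clearly stronger, to avoid flapping.
    if rssi_by_ap[best_ap] - current_rssi >= hysteresis_db:
        return best_ap
    return current_ap

# A laptop that connected in the lobby and then walked down the hall.
readings = {"lobby_ap": -82, "hallway_ap": -55}
print(pick_access_point("lobby_ap", readings))
```

A "sticky" client behaves as if the threshold were set so low that the first branch always wins, which is why it hangs on to the lobby access point long after a better one is in range.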

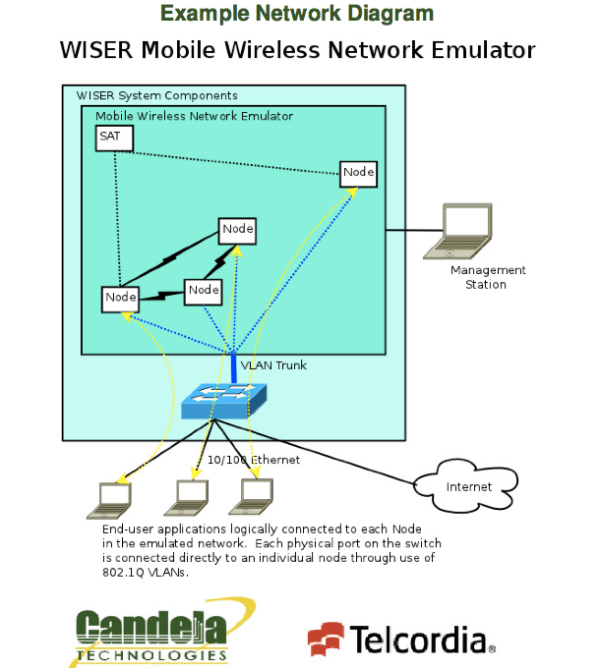

Electromagnetic Radiation Cannot Be Seen

So what? The issue here is that there are all kinds of scenarios where wireless signals bouncing around the environment can destroy service. Think of a highway full of invisible cars traveling in any direction they want. When a wireless network is installed, the contractor in charge does what is called a site survey. This involves special equipment that measures the electromagnetic waves in an area and helps the contractor plan how many wireless access points to install and where to put them. But once the network is installed, anything can happen. Personal hotspots, devices with electric motors, and changes in the arrangement of metal furniture can all destabilize an area, and service can degrade for reasons that nobody can easily detect.

The More People Connected, the Slower Their Speed

Why? Wireless access points share their channel among users in a way that works much like TDM (Time Division Multiplexing). Basically, the available bandwidth is carved up into little time slots. When only one user is connected to an access point, that user gets all the bandwidth; when two users are connected, they each get half the time slots. So an access point advertised at 100 megabits can deliver at best 10 megabits to each user when 10 people are connected to it.
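The arithmetic behind that example is a simple even split. The sketch below assumes every user is active at once and that the advertised rate is fully usable, which is a best case; the function name is made up for illustration.

```python
def best_case_share_mbps(advertised_mbps: float, connected_users: int) -> float:
    """Best-case per-user rate when time slots are split evenly among users."""
    if connected_users < 1:
        raise ValueError("need at least one connected user")
    return advertised_mbps / connected_users

# The 100 megabit access point from the example above.
for users in (1, 2, 10):
    share = best_case_share_mbps(100, users)
    print(f"{users} user(s): {share:.0f} megabits each")
```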

Related Article

Is a Balloon Based Internet Service a Threat to Traditional Cable and DSL?

August 7, 2013, netequalizer

Update: Looks like this might be the real deal. A mystery barge in San Francisco Bay owned by Google

I recently read an article regarding Google’s foray into balloon-based Internet services.

This intriguing idea sparked a discussion on the same subject with some of the engineers at a major satellite Internet provider. They and I were somewhat skeptical about the feasibility of this balloon idea. Could we be wrong? Obviously, there are some unconventional obstacles to bouncing Internet signals off balloons, but what if those obstacles could be economically overcome?

First, let’s look at the practicalities of using balloons to beam Internet signals from ground-based stations to consumers.

Advantages over satellite service

Latency

Satellite Internet, the kind used by Wild Blue, usually comes with a delay of about 1 second, sometimes more. The bulk of this signal delay is due to the distance to a stationary satellite, about 22,000 miles.

A balloon would be located much closer to the earth, in the atmosphere at around 2 to 12 miles up. At this distance, the added latency is a few milliseconds at most.
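A back-of-the-envelope check of the distance effect, assuming signals travel at the speed of light and the relay sits straight overhead. It counts propagation time only; delay from equipment and protocol overhead is not modeled. The four-leg path (user up to the relay, down to a ground station, and the reply back the same way) is an assumption about how the service is routed.

```python
SPEED_OF_LIGHT_MILES_PER_S = 186_282  # approximate, in a vacuum

def round_trip_delay_ms(altitude_miles: float) -> float:
    """Propagation delay in ms for a request and reply relayed overhead.

    Assumes four legs of length `altitude_miles`:
    user -> relay -> ground station, then the reply back the same way.
    """
    return 4 * altitude_miles / SPEED_OF_LIGHT_MILES_PER_S * 1000

satellite_ms = round_trip_delay_ms(22_000)  # stationary satellite
balloon_ms = round_trip_delay_ms(12)        # balloon at the high end of the range

print(f"Satellite: {satellite_ms:.0f} ms")
print(f"Balloon:   {balloon_ms:.3f} ms")
```

Under these assumptions the satellite path costs close to half a second in propagation alone, while the balloon path is well under a millisecond.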

Cost

Getting a basic stationary satellite into space costs at least 50 million dollars, and perhaps a bit less for a low-orbiting, non-stationary satellite.

Balloons are relatively inexpensive compared to a satellite. Although I don’t have exact numbers on a balloon, the launch cost is practically zero, since a balloon carries its payload without any additional energy or infrastructure. The only real costs are the balloon, the payload, and the ground-based stations. For comparison purposes, let’s go with $50,000 per balloon.

Power

Both options can use solar power. Because a balloon’s orientation is hard to control, its solar collectors might need 360 degree coverage; however, as we will see, a balloon can be tethered and periodically raised and lowered, in which case it can run on batteries recharged on the ground.

Logistics

This is the elephant in the room. The position of a satellite over time is extremely predictable. Even satellites that are not stationary can be relied on to be where they are supposed to be at any given time. This makes coverage planning deterministic. Balloons, on the other hand, will wander unpredictably unless they are tethered.

Coverage Range

A balloon at 10,000 feet can cover a radius on the ground of about 70 miles. A stationary satellite can cover an entire continent. So you would need a series of balloons to cover an area reliably.
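For a sense of scale, the geometric line-of-sight horizon from a given altitude can be computed as below. This is only an upper bound on coverage under the assumption of a smooth, spherical earth; usable range also depends on antennas, transmit power, and terrain, none of which are modeled. The 70 mile figure above sits inside this bound.

```python
import math

EARTH_RADIUS_MILES = 3959  # approximate mean radius
FEET_PER_MILE = 5280

def horizon_distance_miles(altitude_feet: float) -> float:
    """Straight-line distance to the horizon from a given altitude."""
    h = altitude_feet / FEET_PER_MILE
    # Pythagoras on the triangle: earth center, balloon, horizon point.
    return math.sqrt(2 * EARTH_RADIUS_MILES * h + h * h)

horizon = horizon_distance_miles(10_000)
print(f"Line-of-sight horizon from 10,000 feet: about {horizon:.0f} miles")
```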

Untethered

I have to throw out the idea of untethered high-altitude balloons. They would wander all over the world and crash back to earth in random places. Even if it were cost-effective to saturate the upper atmosphere with them and pick them out for communications when in range, I just don’t think NASA would be too excited to have thousands of these large balloons in unpredictable drift patterns.

Tethered

As crazy as it sounds, there is a precedent for tethering a communication balloon to a 10,000 foot cable. Evidently the US did something like this to broadcast TV signals into Cuba. I suppose this is possible for an isolated area where you can hang out offshore, well out of the way of any air traffic.

High Density Area Competition

So far I have been running under the assumption that a balloon-based Internet service would be an alternative to satellite coverage, which finds its niche exclusively in rural areas of the world. Given the monopoly and cost advantage existing carriers have in urban areas, a wireless service beaming high speeds from overhead might have some staying power there. Certainly there could be some overlap with rural users, which would make the economics of deployment more favorable: the more subscribers, the better. But I do not see urban coverage as a driving business factor.

Would the consumer need a directional antenna?

I have been assuming all along that these balloons would supply service directly to the consumer. I suspect that some sort of directional antenna, pointed at your local offshore balloon, would need to be attached to the side of your house. This is another reason why the balloons would need to be in a stationary position.

My conclusion is that somebody like Google could conceivably create a balloon zone off any coastline with a series of balloons tethered to barges of some kind. The main problem, assuming cost were not an issue, would be the political ramifications of a plane hitting one of the tethers. With Internet demand on the rise, 4G’s limited range, and the high cost of laying wires to rural homes, I would not be surprised to see a test network someplace in the near future.

Tethered balloon (courtesy of Ars Technica article)
