Our sympathies go out to everyone who has been impacted by Covid-19, whether you had it personally or it affected your family and friends. I personally lost a sister to Covid-19 complications back in May, so I take this virus very seriously.

The question I ask myself now, as we see a light at the end of the Covid-19 tunnel with vaccines anticipated next month, is this: how has Covid-19 changed the IT landscape for us and our customers?

The biggest change that we have seen is increased Internet usage.

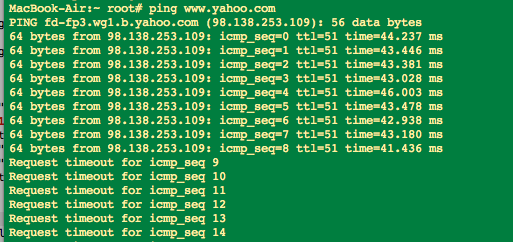

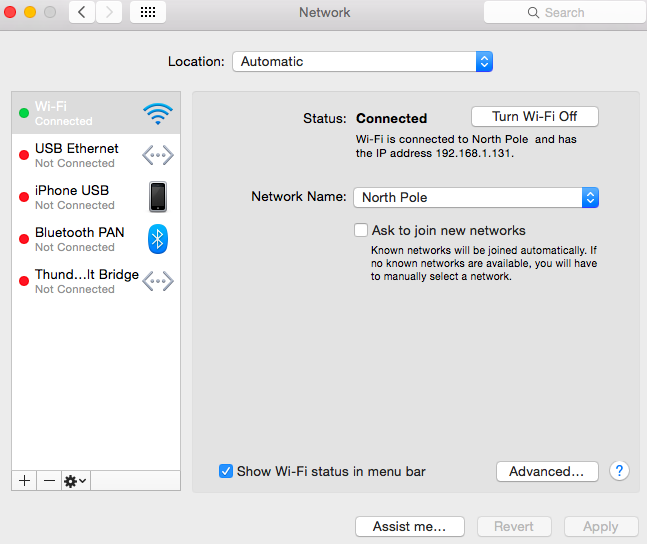

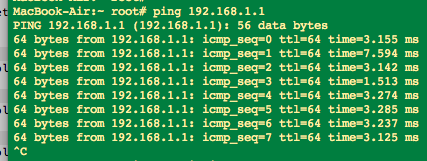

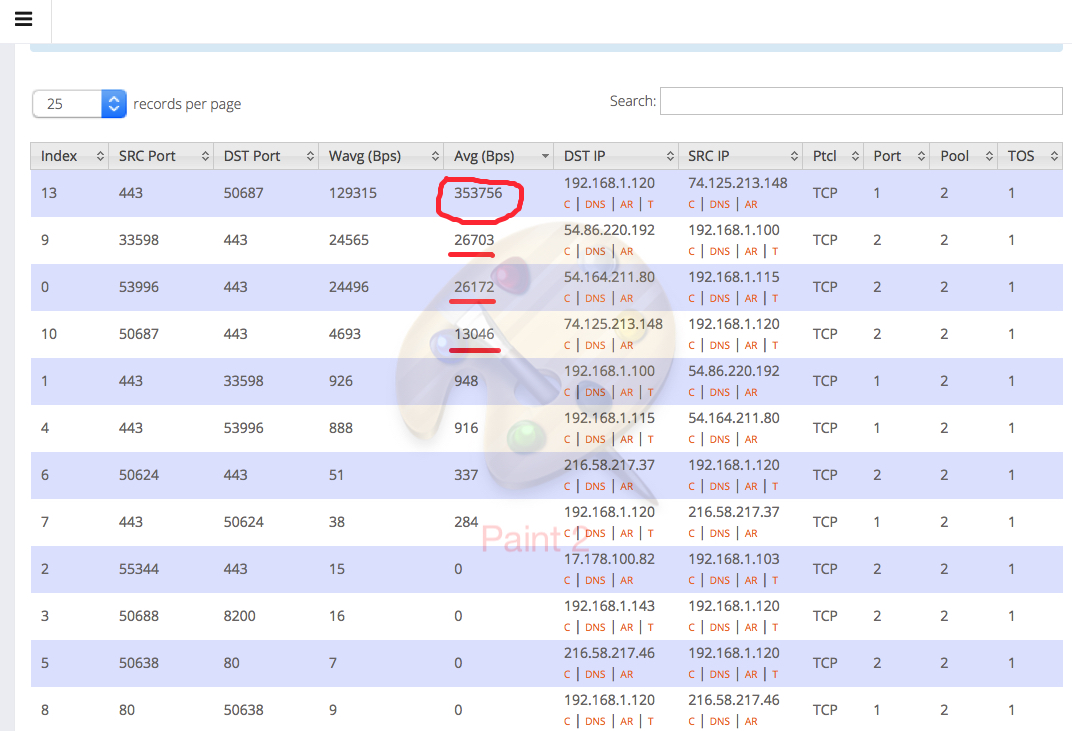

We have seen a 500 percent increase in NetEqualizer license upgrades over the past 6 months, which means that our customers are ramping up their circuits to ensure a work-from-home experience without interruption or outages. What we can't tell for sure is whether these upgrades were made out of an abundance of caution, to get ahead of the curve, or because there was actually a significant increase in demand.

Without a doubt, home Internet usage has increased, as consumers work from home on Zoom calls, watch more movies, and find ways to entertain themselves in a world where they are staying at home most of the time. Did this shift actually put more traffic on the average business office network, where our bandwidth controllers normally reside? The knee-jerk reaction would be "yes, of course," but I would argue not so fast. Let me lay out my logic here…

For one, when a group of people works remotely using the plethora of cloud-hosted collaboration applications such as Zoom or Blackboard, there is very little, if any, extra bandwidth burden back at the home office or campus. The additional cloud-based traffic from remote users is pushed onto their residential ISPs. On the other hand, organizations that did not transition services to the cloud will have their hands full handling the traffic from home users coming into the office over VPN.

Higher Education usage is a slightly different animal. Let’s explore the three different cases as I see them for Higher Education.

1) Everybody is Remote

In this instance it is highly unlikely there would be any increase in bandwidth usage at the campus itself. All of the Zoom or Microsoft Teams traffic would be shifted to the ISPs at the residences of students and teachers.

2) Teachers are On-Site and Students are Remote

For this we can do an approximation.

For each teacher sharing a room session you can estimate 2 to 8 megabits per second of consistent bandwidth load. Take a high school with 40 teachers on active Zoom calls: at the upper end of that range, you could estimate a sustained load of roughly 300 megabits per second dedicated to Zoom. With just a skeleton crew of teachers and no students in the building, the Internet capacity should hold, because the students who normally eat up huge chunks of bandwidth are no longer there.
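The approximation above is plain multiplication, and it can be sketched in a few lines of Python. The function name and parameters below are hypothetical, chosen only for illustration; the 2 to 8 megabit per-session range is the figure from the text, and actual per-session rates will vary with video quality and screen sharing.

```python
def estimate_session_load_mbps(active_sessions, low_mbps=2, high_mbps=8):
    """Return a (low, high) estimate of sustained load in megabits per second.

    Assumes every active session uses between low_mbps and high_mbps,
    the per-teacher range quoted in the text. Hypothetical helper.
    """
    if active_sessions < 0:
        raise ValueError("active_sessions must be non-negative")
    return (active_sessions * low_mbps, active_sessions * high_mbps)


# The high school example from the text: 40 teachers on active calls.
low, high = estimate_session_load_mbps(40)
print(f"Estimated sustained load: {low} to {high} Mbps")
```

For 40 sessions the upper bound works out to 320 Mbps, which is where the rough 300 megabit figure comes from. Comparing that range against the capacity of your circuit gives a quick sanity check before ordering an upgrade.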

3) Mixed Remote and In-person Students

The one scenario that would stress existing infrastructure is the case where students are on campus while classes are simultaneously being broadcast for the students who are unable to attend in person. In this instance, you have close to the normal campus load plus all the Zoom or Microsoft Teams sessions emanating from the classrooms. To top it off, these Zoom or Microsoft Teams sessions are highly sensitive to latency, so the institution cannot risk even a small amount of congestion, as that would interrupt all classes.

Prior to Covid-19, Internet congestion might interrupt a Skype conference call between the sales team and Europe, which is no laughing matter but a survivable disruption. Post Covid-19, an interruption in Internet communication could potentially interrupt the entire organization, which is not tolerable.

In summary, it was probably wise for most institutions to beef up their IT infrastructure to handle more bandwidth, even knowing in hindsight that in some cases it may not have been needed on the campus or at the office. Given the absolutely essential role that Internet communication has played in keeping businesses and Higher Ed connected, it was not worth the risk of being caught with too little.

Stay tuned for a future article detailing the impact of Covid-19 on ISPs…

Out of the Box Ideas for Security

February 19, 2021 - netequalizer

I woke up this morning thinking about the IT industry and its shift from building infrastructure to an industry where everybody is tasked with security, a necessary evil that sucks the life out of companies that could be using their resources for revenue-generating projects. Every new grad I meet is getting their first job at one of the many companies that provide various security services. From bank fraud investigation to white-hat hacking to security auditing, there must be tens if not hundreds of billions of dollars being spent on these endeavors. Talk about a tax burden on society! The amount of money being spent on security and equipment is the real extortion, and there is no end in sight.

The good news is, I have a few ideas that might help slow down this plague.

Immerse Your Real Data in Fake Data

Ever hear of the bank that keeps an exploding dye bag to hand to people who rob them? Why not apply the same concept to data? Create large fictitious databases and embed them within your real data. Obviously you will need a way internally to ignore the fake data and separate it from the real data. Assume for a minute that your internal systems can easily tell the two apart. The fictitious financial data could then be traced when unscrupulous hackers try to use it. Worst case, it would waste their time.

Assuming the stolen data is sold on the dark web, their dark web customers are not going to be happy when they find out the data does not yield any nefarious benefits. The best case is this would also leave a trail for the good guys to figure out who stole the data just by monitoring these fictitious accounts. For example, John James Macintosh, Age 27, of Colby, Kansas does not exist, but his bank account does, and if somebody tried to access it you would instantly know to set a trap of some kind to locate the person accessing the account (if possible).
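Here is a minimal sketch of how the decoy idea might look in code. Everything in it is an assumption made for illustration: the account IDs, the `DECOY_IDS` registry, the placeholder real customer, and the function names are all hypothetical, and a real system would keep the decoy registry somewhere an intruder could not steal along with the data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    account_id: str
    name: str
    city: str


# Hypothetical registry of decoy account IDs, kept apart from the data itself
# so that stolen records give no hint about which ones are fake.
DECOY_IDS = {"ACCT-9001"}

# Real and fictitious records stored side by side (all values are made up).
ACCOUNTS = [
    Account("ACCT-1001", "Example Real Customer", "Example City"),
    Account("ACCT-9001", "John James Macintosh", "Colby, Kansas"),
]


def real_accounts(accounts, decoy_ids=DECOY_IDS):
    """Internal view of the data: silently skip the decoys."""
    return [a for a in accounts if a.account_id not in decoy_ids]


def check_access(account_id, source, decoy_ids=DECOY_IDS):
    """Return an alert message if a decoy account is touched, else None."""
    if account_id in decoy_ids:
        return f"ALERT: decoy account {account_id} accessed from {source}"
    return None


# Nobody legitimate should ever touch the decoy, so any access is a signal.
print(check_access("ACCT-9001", "203.0.113.7"))
```

The design choice worth noting is that internal reports only ever call something like `real_accounts`, while every access path runs something like `check_access`, so the fake records cost legitimate users nothing but light up the moment a thief tries to use them.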

The same techniques are used in counterintelligence to root out traitors and spies. Carefully planted fake information is dispersed as classified, and through careful forensics, security agencies can find the leaker (spy).

Keep the Scammers on the Phone

You could also put an end to spam and phone scams, perhaps with a few AI agents working on your behalf. Train these AI entities to respond to all spam and phone scams like an actual human. Have them respond to every obnoxious spam email, and engage any phone scammer with the appropriate responses to keep them on the phone.

These scams only persist because there are just enough little old ladies and just enough people who wishfully open spyware, etc. The phone scammers that call me operate in a world where only their actual targets press "1" to hear about their auto warranty options. My guess is 99.9 percent of the people who get these calls hang up instantly or don't pick up at all. This behavior actually benefits the scammer, as it makes their operation more efficient. Think about it: they only want to spend their phone time and energy on potential victims.

There is an old saying in the sales world that a quick “no” from a contact is far better than spending an hour of your time on a dead end sale. But suppose the AI agents picked up every time and strung the scammer out. This would quickly become a very inefficient business for the scammer. Not to mention computing time is very inexpensive and AI technology is becoming standard. If everybody’s computer/iPhone in the world came with an AI application that would respond to all your nefarious emails and phone scams on your behalf, the scammers would give up at some point.

Those are my two favorite security ideas for now. Let me know if you like either of these, or if you have any of your own out-of-the-box security ideas.
