LAFAYETTE, Colo.–(BUSINESS WIRE)–APconnections, an innovation-driven technology company that delivers best-in-class network traffic management appliances, and Global Gossip, a leader in network managed services for the lodging industry, today announced the joint Hotel Management System Integrated Offering (HMSIO).

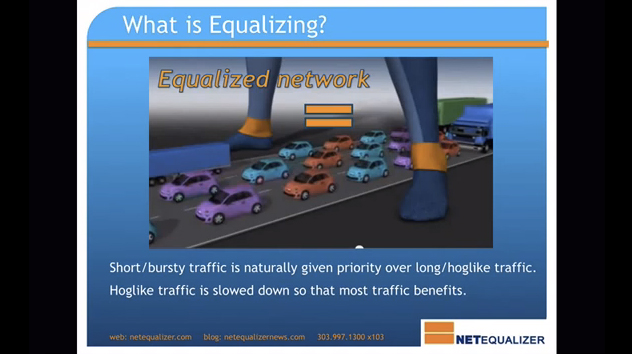

The joint offering combines the strengths of the NetEqualizer behavior-based bandwidth shaping appliance with Global Gossip’s world-class managed network services offering. HMSIO will offer hotel and lodging customers a full suite of capabilities to manage their wireless networks, including customized authentication, behavior-based bandwidth shaping, 24/7/365 support, a cloud-based monitoring portal, and network design services. With HMSIO, hospitality and lodging customers can provide a “low noise”, high-quality wireless Internet experience to guests, along with unmatched excellence in customer support. Learn more in our HMSIO Data Sheet.

Global Gossip’s Director of U.S. Operations, Sam Beskur, says, “Working with APconnections on this joint solution offers tremendous potential. Since the integration of NetEqualizer into our head-end stack we have been able to offer a much improved end user Wi-Fi experience and overall greater customer satisfaction.”

APconnections’ CEO, Art Reisman, stated, “We have been looking for the right partner to offer an end-to-end network solution to our lodging industry customers. With their worldwide footprint and excellent technical support, Global Gossip’s network services are a great complement to our NetEqualizer bandwidth shaping products.”

About Global Gossip

Global Gossip (http://hsia.globalgossip.com) has been developing network and communication solutions since 1999 and currently manages and maintains over three hundred wired and wireless access networks globally. Our service locations span seven countries and range from sites as remote and bandwidth-challenged as the central Australian desert to high-throughput networks in downtown London, England. Global Gossip has offices in Denver, Colorado; Sydney, Australia; and London, England.

About APconnections

APconnections is a privately held company founded in 2003 and is based in Lafayette, Colorado, USA (http://netequalizer.com). Our flexible and scalable network traffic management solutions can be found at thousands of customer sites in public and private organizations of all sizes across the globe, including: Fortune 500 companies, major universities, K-12 schools, Internet providers, libraries, and government agencies on six continents.

Does your ISP restrict you from the public Internet?

April 14, 2013 - netequalizer

By Art Reisman

The term “walled-off garden” refers to the practice of a service provider locking you into its own local content. The classic example is AOL in its early years. Originally, when you used their dial-up service, AOL provided all the content you could want. AOL granted access to the actual Internet only after other dial-up Internet providers started to compete with its closed offerings. Today, using much more subtle techniques, Internet providers still try to keep you on their networks. The reason is simple: it costs them money to transfer you across a boundary to another network, so it is in their economic interest to keep you within their own network.

So how do Internet service providers keep you on their network?

1) Sometimes with monetary incentives. For example, with large commercial accounts they just tell you it is going to cost more. My experience with this practice is first hand: I have heard testimonials from many of our customers running ISPs, mostly outside the US, who are sold a chunk of bulk bandwidth with conditions. The terms are often something on the order of:

Obviously, there is going to be a trickle-down effect: under such terms, the regional ISP is going to try to discourage usage outside of the local country.

2) Then there are more passive techniques, such as blatantly looking at your private traffic and just not letting you off their network. This technique was implemented in the US by large service providers back in the mid-2000s. Basically, they targeted peer-to-peer requests and made sure you did not leave their network. Essentially, you would only find content from other users within your provider’s network, even though it would appear as though you were searching the entire Internet. Special equipment was used to intercept your requests and only allow you to probe other users within your provider’s network, thus saving the provider money by avoiding Internet Exchange fees.

3) Another way your provider will try to keep you on their network is to offer local mirrored content. Basically, they keep a copy of common files at a central location. In most cases this causes the user no harm, as they still get the same content. But it can cause problems if not done correctly: the provider risks sending out old data or obsolete news stories that have since been updated.

4) Lastly, some governments just outright block content, but this is mostly for political reasons.
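To make the stale-copy risk in technique 3 concrete, here is a minimal Python sketch of a local mirror that re-fetches a file once its copy is older than a set limit. It is an illustration under assumptions, not how any particular provider's equipment works: the `LocalMirror` class, the `fetch_from_origin` callback, and the `max_age_seconds` setting are all hypothetical names invented for this example.

```python
import time
from dataclasses import dataclass


@dataclass
class MirroredCopy:
    body: str
    fetched_at: float  # time (in seconds) when the copy was taken


class LocalMirror:
    """Hypothetical provider-side mirror; all names here are illustrative."""

    def __init__(self, fetch_from_origin, max_age_seconds):
        # fetch_from_origin: any callable that takes a URL and returns its content
        self.fetch_from_origin = fetch_from_origin
        self.max_age_seconds = max_age_seconds
        self.copies = {}

    def get(self, url, now=None):
        now = time.time() if now is None else now
        copy = self.copies.get(url)
        if copy is None or now - copy.fetched_at > self.max_age_seconds:
            # No copy yet, or the copy is too old: cross the network
            # boundary and refresh it from the original source.
            copy = MirroredCopy(self.fetch_from_origin(url), now)
            self.copies[url] = copy
        # Otherwise the user is served the local copy, stale or not.
        return copy.body
```

Within the age limit, the mirror keeps serving its old copy even if the original has changed, which is exactly the window in which a user can receive an outdated news story. A shorter limit narrows that window at the cost of more trips across the network boundary.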

Editor’s Note: There are also political reasons to control where you go on the Internet, as practiced in China and Iran.

Related Article: AOL folds original content operations

Related Article: Why Caching alone won’t speed up your Internet
