|

We hope you enjoy this month’s NetEqualizer Newsletter. Highlights include features from Release 8.4, our 2016 Leasing Program, and a presentation highlighting the NetEqualizer at the 2016 ASCUE Conference.

|

March 2016

|

| Release 8.4 is almost here! |

|

Greetings! Enjoy another issue of NetEqualizer News.

I write this today in the midst of a spring blizzard in Colorado. So far I have at least 15 inches of snow and drifts up to three feet outside my house, and the wind continues to blow more snow in at 35 miles an hour. Just another typical March day in Colorado! I was hoping to talk about spring in this newsletter, but now it seems far away.

This month we are talking about our upcoming release, slated for May, which features a lot of cool Usability Enhancements. Read below to learn more. We also continue our discussion on how the NetEqualizer is Cloud-Ready, as all things Cloud continue to be top-of-mind for all of us. We are excited to announce that we will be represented at the ASCUE Conference in June. Join Young Harris College at their talk featuring the NetEqualizer. And finally, we share more news about our 2016 Leasing Program, and how we are keeping bandwidth shaping affordable.

And remember we are now on Twitter! You can follow us @NetEqualizer.

We love it when we hear back from you – so if you have a story you would like to share with us of how we have helped you, let us know. Email me directly at art@apconnections.net. I would love to hear from you!

– Art Reisman (CTO) |

| In this issue:

:: NetEqualizer Release 8.4 – Enhanced Usability – Is Almost Ready! |

| NetEqualizer Release 8.4 – Enhanced Usability – Is Almost Ready! |

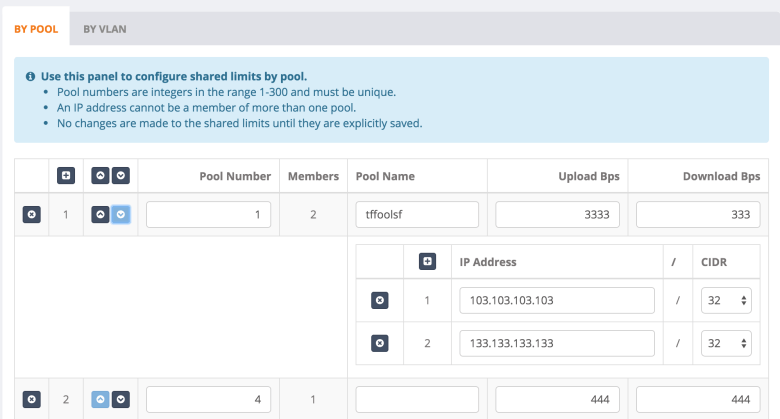

| A Complete GUI Redesign!

We recently had the chance to kick the tires on our new 8.4 Release interface. It really has some significant wow-factor features. In hindsight, perhaps we should have called this NetEqualizer 9.0 and not just lowly 8.4. We have been talking about this release as a GUI Redesign & Pool Enhancements, but I really think 8.4 is a release full of Usability Enhancements that will make it easier to manage and configure your NetEqualizer.

The biggest changes center on the regular NetEqualizer GUI. We have transitioned everything to share the same look and feel as RTR. Here are some of the pages and features we are most excited about!

1) Edit traffic limits on the fly without having to add/remove them one at a time! The screenshot below shows the Pool/VLAN shared limit interface. You can see the Pools, their names, and their associated members.

2) We added a cool new dashboard that serves as the homepage for NetEqualizer management (license key information blocked out in grey).

3) The new GUI also has an easy way to set the time and pick a timezone – no more logging in to the NetEqualizer terminal!

4) You can now choose your units for the entire interface! This includes units for the configuration and RTR.

Check back next month for an update on more exciting changes planned for 8.4!

Our time frame for General Acceptance of this release is May of 2016.

As with all software releases, the 8.4 Release will be free to all customers with valid NetEqualizer Software and Support (NSS). |

| Keeping Bandwidth Shaping Affordable |

| NetEqualizer Leasing Program

At APconnections, we are proud of our reputation for offering affordable bandwidth shaping solutions. In the summer of 2013, we decided that we could help customers who need to better align costs with recurring revenue by offering a Leasing Program.

We are happy to announce that we have enhanced our lease offerings in 2016. Our “Standard” lease now comes with a 1Gbps license, and leases for $500 per month. Adding 1Gbps fiber at any of our lease levels raises the price by $100 per month. And for those needing maximum performance, we now also give you access to an Enterprise-class NE4000 with our 5Gbps license and 10Gbps fiber.

If leasing is of interest to you, and you would like to learn more, you can view our Leasing Program agreement here. Please note that the NetEqualizer Leasing Program is generally available to customers in the United States and Canada. If you are outside of these countries, contact us to see if leasing is available in your area. |

| Join a Presentation on NetEqualizer at ASCUE in June 2016 |

| Association Supporting Computer Users in Education

We are excited to announce that one of our long-time customers, Hollis Townsend, Director of Technology Support and Operations at Young Harris College, will be talking about his experience with the NetEqualizer in his talk at ASCUE, June 12-16, 2016 in Myrtle Beach, South Carolina. Young Harris has been using NetEqualizer to solve their network congestion issues since July 2007. They have upgraded their NetEQ as their network has grown over the years, and currently run an NE3000 with a 1Gbps license.

We are also happy to announce that APconnections, home of the NetEqualizer, will be a Silver Sponsor at the ASCUE Conference. We will be giving away a great door prize – a Fitbit fitness watch!

If you use technology in higher education, you may want to consider attending ASCUE this June. And if you have ever wanted to talk to a colleague about their experience with the NetEqualizer, please join Hollis’ presentation. His presentation is tentatively titled “Shaping Bandwidth – Learning to Love Netflix on Campus”.

ASCUE is the Association Supporting Computer Users in Education, and they have been around since 1968. Members hail from all over North America. ASCUE’s mission is to provide opportunities for resource-sharing, networking, and collaboration within an environment that fosters creativity and innovation in the use of technology within higher education.

Click here to learn more about ASCUE or register for the June conference. |

| Six Ways to Save with Cloud Computing |

| NetEqualizer Looks to the Clouds

We are continuing our focus on the cloud for NetEqualizer. The NetEqualizer is now cloud ready – as we’ve written about in previous newsletters. There are a lot of benefits to using the cloud in general. Here are just a few:

1) Fully utilized hardware

The last one, lower network costs, is interesting. Since your business services are in the cloud, you can ditch all of those expensive MPLS links that you use to privately tie your offices to your back-end systems, and replace them with lower-cost commercial Internet links. You do not really need more bandwidth, just better bandwidth performance. The commodity Internet links are likely good enough, but when you move to the Cloud, you will need a smart bandwidth shaper.

Your link to the Internet becomes even more critical when you go to the Cloud. But that does not mean bigger and more expensive pipes. Cloud applications are very lean and you do not need a big pipe to support them. You just need to make sure recreational traffic does not cut into your business application traffic.

The NetEqualizer fits perfectly as the bandwidth shaping product in the above infrastructure. Let us know if you have any questions about the cloud-ready NetEqualizer! |

| Best Of Blog |

| How to Build Your Own Speed Test Tool

By Art Reisman – CTO – APconnections

Editor’s Note: We often get asked to “prove” the NetEqualizer is making a difference regarding end user experience. The tool description and method outlined in our blog post can be used to objectively justify the NetEqualizer value. Let us know if you need any help setting it up.

Most speed test sites measure the download speed of a large file from a server to your computer. There are two potential problems with using this metric.

1) ISPs can design their networks so these tests show best case results.

A better test of your perceived speed is how long it takes to load up a new web page… |
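To make the page-load idea concrete, here is a minimal Python sketch. It is an illustration only and not the tool described in the blog post: the function names `time_page_load` and `median_load_time`, the `opener` parameter, and the choice to report a median are all assumptions made for this example. It also times only the HTML document itself, not the images and scripts a browser would fetch, so treat the number as a rough lower bound on perceived load time.

```python
import time
import urllib.request


def time_page_load(url, timeout=10.0, opener=urllib.request.urlopen):
    """Return the seconds taken to fetch the full HTML body at `url`.

    `opener` is a hypothetical hook (defaulting to urllib's urlopen) so the
    timing logic can be exercised without a live network connection.
    """
    start = time.perf_counter()
    with opener(url, timeout=timeout) as response:
        response.read()  # pull the whole body, not just the headers
    return time.perf_counter() - start


def median_load_time(url, samples=5, **kwargs):
    """Fetch `url` several times and return the median elapsed time.

    A median is used here (an assumption, not the blog's method) so that a
    single slow or fast outlier does not dominate the result.
    """
    times = sorted(time_page_load(url, **kwargs) for _ in range(samples))
    return times[len(times) // 2]


if __name__ == "__main__":
    # Replace with a page your users actually visit.
    print(median_load_time("https://example.com/", samples=3))
```

Running the same measurement against the same page at busy and quiet times of day gives a simple before-and-after comparison.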

|

Photo Of The Month

Balloon

Have you ever wondered what happens to balloons when they are released into the sky? The remnants of this balloon landed right in front of a staff member on a clear day while hiking Black Star Canyon in Orange County, CA. Balloons like this are actually an environmental disaster as they often end up in oceans and are eaten by sea and wildlife.

|

|

Cloud Computing – Do You Have Enough Bandwidth? And a Few Other Things to Consider

December 10, 2011 – netequalizer

The following is a list of things to consider when using a cloud-computing model.

Bandwidth: Is your link fast enough to support cloud computing?

We get asked this question all the time: What is the best-practice standard for bandwidth allocation?

Well, the answer depends on what you are computing.

– First, there is the application itself. Is your application dynamically loading up modules every time you click on a new screen? If the application is designed correctly, it will be lightweight and come up quickly in your browser. Flash video screens certainly spruce up the experience, but I hate waiting for them. Make sure when you go to a cloud model that your application is adapted for limited bandwidth.

– Second, what type of transactions are you running? Are you running videos and large graphics or just data? Are you doing photo processing from Kodak? If so, you are not typical, and moving images up and down your link will be your constraining factor.

– Third, are you sharing general Internet access with your cloud link? In other words, is that guy on his lunch break watching a replay of royal wedding bloopers on YouTube interfering with your salesforce.com access?

The good news is (assuming you will be running a transactional cloud computing environment – e.g. accounting, sales database, basic email, attendance, medical records – without video clips or large data files), you most likely will not need additional Internet bandwidth. Obviously, we assume your business has reasonable Internet response times prior to transitioning to a cloud application.

Factoid: Typically, for a business in an urban area, we would expect about 10 megabits of bandwidth for every 100 employees. If you fall below this ratio, 10/100, you can still take advantage of cloud computing but you may need some form of QoS device to prevent the recreational or non-essential Internet access from interfering with your cloud applications. See our article on contention ratio for more information.
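The 10/100 rule of thumb above is easy to turn into a quick check. The following Python sketch is only an illustration of that arithmetic; the function names `recommended_mbps` and `needs_qos` are made up for this example, and the ratio is the article's rough guideline for an urban business, not a hard limit.

```python
def recommended_mbps(employees, mbps_per_100=10.0):
    """Bandwidth suggested by the rule of thumb: 10 Mbps per 100 employees."""
    return employees * mbps_per_100 / 100.0


def needs_qos(employees, link_mbps, mbps_per_100=10.0):
    """True if the link falls below the rule-of-thumb ratio.

    Falling below the ratio does not rule out cloud computing; per the
    article, it suggests that some form of QoS device may be needed.
    """
    return link_mbps < recommended_mbps(employees, mbps_per_100)


if __name__ == "__main__":
    # Hypothetical office: 250 employees sharing a 20 Mbps link.
    print(recommended_mbps(250))   # 25.0
    print(needs_qos(250, 20.0))    # True
```

In this hypothetical case the office is 5 Mbps short of the guideline, which is the situation where shaping recreational traffic matters most.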

Security: Can you trust your data in the cloud?

For the most part, chances are your cloud partner will have much better resources to deal with security than your enterprise, as this should be a primary function of their business. They should have an economy of scale – whereas most companies view security as a cost and are always juggling those costs against profits, cloud-computing providers will view security as an asset and invest more heavily.

We addressed security in detail in our article how secure is the cloud, but here are some of the main points to consider:

1) Transit security: moving data to and from your cloud provider. How are you going to make sure this is secure?

2) Storage: once your data reaches your cloud provider, is it secure from an outside hacker?

3) Inside job: this is often overlooked, but can be a huge security risk. Who has access to your data within the provider network?

Evaluating security when choosing your provider.

You would assume the cloud company, whether it be Apple or Google (Gmail, Google Calendar), uses some best practices to ensure security. My fear is that ultimately some major cloud provider will fail miserably, just as banks and brokerage firms have. Over time, one or more of them will become complacent. Here is my checklist of what I would want in my trusted cloud computing partner:

1) Do they have redundancy in their facilities and their access?

2) Do they screen their employees for criminal records and drug usage?

3) Are they willing to let you, or a truly independent auditor, into their facility?

4) How often do they back up data and how do they test recovery?

Big Brother is watching.

This is not so much a traditional security threat, but if you are using a free service you are likely going to agree, somewhere in their fine print, to expose some of your information for marketing purposes. Ever wonder how those targeted ads appear that are relevant to the content of the mail you are reading?

Link reliability.

What happens if your link or your provider's link goes down? How dependent are you? Make sure your business or application can handle unexpected downtime.

Editor's note: unless otherwise stated, these tips assume you are using a third-party provider for resources and applications and are not a large enterprise with a centralized service on your Internet. For example, using QuickBooks over the Internet would be considered a cloud application (and one that I use extensively in our business); however, centralizing Microsoft Excel on a corporate server with thin terminal clients would not be cloud computing.
