By Art Reisman

Updated September 2012

Updated Jan 2013

Art Reisman is currently CTO and co-founder of APconnections, creator of the NetEqualizer. He has worked at several start-up companies over the years and has invented and brought several technology products to market, both on his own and with the backing of larger corporations. This includes tools for the automotive industry.

The following post will serve as a running list of various ideas as I think of them.

The reason I’m sharing them is simply that I hate to let an idea go to waste. Notice that I did not say a good idea. An idea cannot be judged until you make an attempt to develop it further, which I have not done in most cases.

Note: I cannot ensure exclusive rights or ownership for the development of any of these ideas.

1) A Real, Unbiased, Cell Phone Coverage Map

We all know those spots on the interstate and parts of town where our cell phone coverage is worthless. If you could publish an easy-to-use, widely-accepted and maintained guide to these areas, it would become a very popular site.

Research: From my brief search on the subject, a consumer trade rag called CNET has done some work in this area, but I could only find their demos and press releases. I kept getting a map of the Seattle area with no obvious way to get a broader map search.

2) Commodity Land Trading Site

If you have ever flown over the Great Plains you have noticed a gigantic, undeveloped sea of crop and grass land. It is very hard to invest in these tracts in parcels of less than 1,000 acres. Unlike commercial and residential real estate, land prices are fairly easy to quantify, and the simplicity of land allows most of these tracts to be sold at auction. Larger portfolio managers and partnerships snap them up in the same way they would invest in a mutual fund. The idea is to place a large portion of farm land into a fund that can easily trade in fractional shares – each representing a real, tangible share of the land.

Research: There is a farm production site with a similar model already.

3) Visit Wineries From all 50 U.S. States at One Location

The idea here is to have one themed retail outlet where you can buy wines from all 50 states with each state given an equal share of floor space. Wines would be set up in themed booths from each state’s wine-producing area, with history and background literature also available. Wines would be from unique, boutique-type wineries and perhaps a few dollars more than the list price. In other words, this store would be more of a themed destination near a major interstate or tourist hub. Every state in the country has wineries, and most have wine growing areas.

Research: Article on wines from all 50 states.

4) Reclaimed Barn Wood

At one time the homesteads on the Great Plains numbered one per approximately 160 acres. Now there is about one family farm per several thousand acres. As families have consolidated, all that remains are numerous, small, weathered barns and sheds. I would imagine the demand for this reclaimed wood would be on the East Coast and West Coast. There is a company that specializes in reclaimed barn wood; however, I suspect the market has room for another player.

5) Site Dedicated to Debunking Dead-end Technologies

Often over the span of their careers, engineers are forced to work on technologies that are politically driven and just downright impractical or stupid. Once there is money or political pressure behind them, finding opposing views is hard to do. However, for investors or companies betting the house on them, an unbiased opinion from somebody with a brain would have great value, especially if such data could avert billions of dollars of wasted investment and time on technologies destined to fail. A couple of examples of overhyped technologies that drove product decisions are:

VXML

Artificial Intelligence

Voice Recognition

This is not to say there was no merit in these technologies, but they had some basic flaws that have made them fall far short of their promises. These shortfalls were easily understood by many engineers working on them, but once the promises were sold to investors, the shortcomings were swept under the rug.

6) Find Me a Human

I searched the other day for a tool like this and so far have come up empty.

The tool would place your phone call to a corporation or government agency, and call you back once it had a human on the line. The “how” does not matter to the end user here, but it would involve the reverse engineering of corporate call trees in order to navigate them for you.
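To make the call-tree navigation a little more concrete, here is a rough sketch of one way it could work. Everything in it is an assumption for illustration: the menu layout in `CALL_TREE`, the `"HUMAN"` marker, and the function name `shortest_path_to_human` are all made up, and a real service would first have to map each company's menus somehow. The sketch only shows that once a menu is mapped, finding the fewest key presses to a live person is a simple breadth-first search.

```python
from collections import deque

# Hypothetical call tree: each menu maps a key press to either another
# menu (a dict), a dead-end recording, or the marker "HUMAN".
# This is invented data, not any real company's phone menu.
CALL_TREE = {
    "1": {"1": "recording", "2": {"0": "HUMAN"}},
    "2": "recording",
    "3": {"9": "HUMAN", "1": "recording"},
}


def shortest_path_to_human(tree):
    """Breadth-first search for the fewest key presses that reach a human."""
    queue = deque([(tree, [])])  # (current menu, key presses so far)
    while queue:
        menu, presses = queue.popleft()
        for key, target in menu.items():
            if target == "HUMAN":
                return presses + [key]
            if isinstance(target, dict):
                # A sub-menu: explore it after the current level is done.
                queue.append((target, presses + [key]))
    return None  # No path to a human in this tree.


print(shortest_path_to_human(CALL_TREE))
```

With the made-up menu above, the search picks the two-press route through option 3 rather than the three-press route through option 1.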

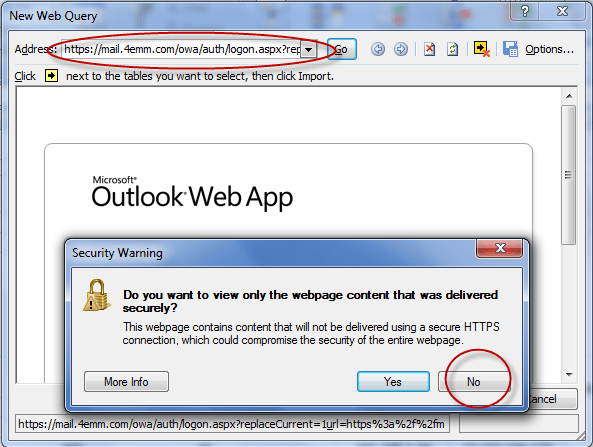

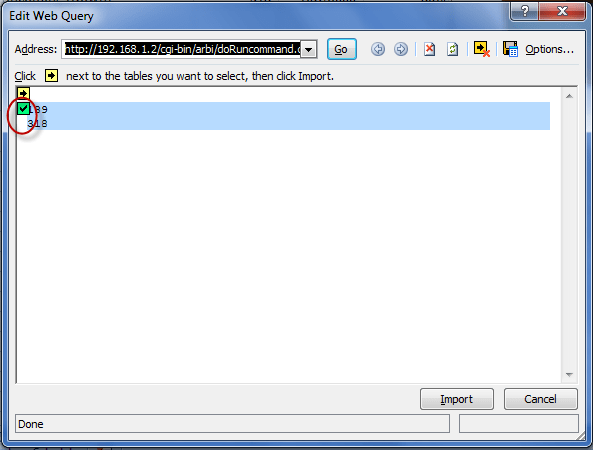

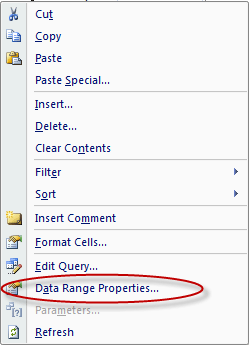

7) A Natural Speed Test Tool for Corporations and Users with Higher-end Connections

Most speed tests are initiated by the user at a specific time, usually when they suspect their Internet is slow. But what if you have a busy corporate Internet connection? In this case, you might have hundreds of users on the link at one time, and running a speed test is not likely practical for a couple of reasons:

1) Speed tests usually transfer small files that finish quickly. For example, a 10 megabit file on a 100 megabit-per-second link would complete in 0.1 seconds, and perhaps correctly report the link speed to the operator, but this test would be irrelevant when compared to the same link’s performance with 1000 users downloading files all day long.

2) Speed tests might be able to test line speed to your nearest POP, but almost all public speed test sites are designed for consumers sending relatively short files to nearby local servers.
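The arithmetic behind the first point can be sketched in a few lines. This is a back-of-the-envelope illustration only: the function names are made up, latency and protocol overhead are ignored, and the even split of the link among 1000 users is a simplifying assumption, not how a real link behaves under load.

```python
def transfer_seconds(file_megabits: float, link_mbps: float) -> float:
    """Ideal transfer time, ignoring latency and protocol overhead."""
    return file_megabits / link_mbps


def per_user_mbps(link_mbps: float, active_users: int) -> float:
    """Assumed even split of the link among active users (a simplification)."""
    return link_mbps / active_users


# A 10 megabit test file on an otherwise idle 100 Mbps link.
idle = transfer_seconds(10, 100)

# The same file when 1000 users are each getting an equal share of the link.
busy = transfer_seconds(10, per_user_mbps(100, 1000))

print(f"idle link: {idle:.1f} s, busy link: {busy:.1f} s")
```

Under these assumptions the same 10 megabit transfer goes from about a tenth of a second on the idle link to about 100 seconds on the busy one, which is why a quick one-off test says little about a loaded corporate connection.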

The good news is we have this in beta with our NetEqualizer product.

8) Web Search Engine for Faces or Images

You seed the search engine with an image or picture and it will scour the web looking for similar people. Perhaps something that could be used in crime fighting? I suspect something like this already exists but not at a consumer level.

Research: TinEye is trying to accomplish this feat at a consumer level.

9) A Search Engine that Really Finds What You are Looking For

When I first started using the Web, it seemed that all my searches found relevant content. Looking back, almost all the original content on the Web was academic. Academia and government predated any commercial use of the Web. Today, it seems like you can’t find anything non-commercial, and I suspect the reason is that commercial content simply overwhelms the system. Perhaps this Web search engine would filter all commercial content.

For example, last night I was looking for a free radio station that plays content similar to Sirius Satellite Radio’s “Deep Tracks.” I have this station in my car, but I really did not want to upgrade my subscription to listen to radio on the Internet when there are thousands of free radio stations. My searches kept coming up with the same commercial crap and I had to weed through it, spending almost an hour trying to decipher it. Whenever I did find a station that claimed to play Deep Tracks, it turned out not to follow that format. They were all local stations playing the same exact top 100 classic rock songs over and over. What got me going is that I know there is some freak out there with a Deep-Tracks-like playlist. However, instead of finding that person, I am relegated to researching the old-fashioned way – human-to-human through forums and blogs – as the Web search engines cannot understand my context.

10) Insect Biomass in Pet Food

We had a very bad grasshopper outbreak in our yard this year. The little buggers eventually moved into the garden and chewed up the pumpkin plants and the tassels on the corn plants. Rather than use insecticides and try to destroy them, there must be a commercial use for them. Perhaps if you could attract them in large numbers into a trap and grind them into a high protein dog food there might be a market for them? They are free and abundant in most grassy areas, so the main cost would be in collection, transport, processing and marketing. I like this idea.

11) Buffalo Gourd Oil and Byproducts

This little gourd is the toughest, most drought-resistant plant I have ever seen. The only problem with it is that the pulp is bitter. It may be the most bitter substance known to mankind. I should know; I tried it. All the data on it claims there is nothing toxic in it, and I am pretty sure the cows that roam our pasture eat the gourds and leave the plant.

So where is the commercial value?

If you can figure out a process to efficiently separate the seeds from the pulp, the oil when pressed is delightfully sweet. I spent about 2 hours cleaning seeds and then ran a cupful through my manual seed press. The oil was very tasty.

Why bother with Buffalo Gourd?

Well, unlike other dryland crops grown in the western Great Plains, such as corn and sunflowers:

1) The Buffalo Gourd is a perennial that puts down a tap root and finds deep water sources.

2) It grows well in the bottomlands and on hillsides where it can find deep groundwater, places that most farmers have no use for with their cultivated crops.

3) It thrives quite easily when other plants are withering in drought.

4) It also grows back in the same spot without reseeding.

5) The seed oil is delicious.

6) I am guessing the rest of the plant can be used as an insecticide or mosquito repellent. I am going to try it.

The technical issues with this plant are:

1) Harvesting en masse; the gourds may need to be hand picked.

2) Drying and separating the seed from the pulp.

12) A Real Halloween Town, Not Just a Fancy Pumpkin Patch

This idea just won’t go away. The basic premise would be to create a real neighborhood in a real Midwestern town where it is always Halloween. I am not sure of the economics. Here is what I have fleshed out so far.

-Small town with older houses within 45 minutes of a population center

-Purchase 4 to 6 older, larger homes on a residential block

-Work with the city to get some sort of exemption or special-use business license

-Refurbish the exteriors in Halloween colors and trim

-The town should be a liberal arts college town with a strong theatre department; hire 20 or so students, give them free rent in the houses, and have them rotate through shifts as Halloween characters

-Have characters always on shift; the idea is that it is always a Halloween town, not a park that opens and closes

-No charge for roaming the streets, but there would be a charge for house tours; the houses and their back yards would have various special effects

Other Related Articles:

Technology Predictions for 2012

Practical and Inspirational Tips on Bootstrapping

Building a Software Company from Scratch

Commentary: Is IPv6 Heading Toward a Walled-Off Garden?

September 25, 2011 - netequalizer

In a recent post we highlighted some of the media coverage regarding the imminent demise of the IPv4 address space. Subsequently, during a moment of introspection, I realized there is another angle to the story. I first assumed that some of the lobbying for IPv6 was a hardware-vendor-driven phenomenon, but there seems to be another aspect to the momentum of IPv6. In talking to customers over the past year, I learned they were already buying routers that were IPv6 ready, but there was no real rush. If you look at traditional router sales numbers over the past couple of years, you won’t find anything earth shattering. There is no hockey-stick curve to replace older equipment. Most of the IPv6 hardware sales were done in conjunction with normal upgrade timelines.

The hype had to have another motive, and then it hit me. Could it be that the push to IPv6 is a back-door opportunity for a walled-off garden? A collaboration between large ISPs, a few large content providers, and mobile device suppliers?

Although the initial World IPv6 Day offered no special content, I predict some future IPv6 Day will have the incentive of extra content. The extra content will be a treat for those consumers with IPv6-ready devices.

The wheels for a closed-off Internet are already in motion. Take for example all the specialized apps for the iPhone and iPad. Why can’t vendors just write generic apps like they do for a regular browser? Proprietary offerings often get stumbled into. There are very valid reasons for specialized apps for the iPhone, and no evil intent on the part of Apple, but it is inevitable that as their market share of mobile devices rises, vendors will cease to write generic apps for general web browsers.

I don’t contend that anybody will deliberately conspire to create an exclusively IPv6 club with special content, but I will go so far as to say that in the fight for market share, product managers know a good thing when they see it. If you can differentiate content and access on IPv6, you have an end run around the competition.

To envision how a walled garden might play out on IPv6, you must first understand that it is going to be very hard to switch the world over to IPv6 and it will take a long time – there seems to be agreement on that. But at the same time, a small number of companies control a majority of the access to the Internet and another small set of companies control a huge swath of the content.

Much in the same way Apple is obsoleting the generic web browser with their apps, a small set of vendors and providers could obsolete IPv4 with new content and new access.
