By Art Reisman – CTO – www.netequalizer.com

This morning, just for fun, I decided to isolate the latency on a route from my home office to a computer located at a remote hunting lodge. The hunting lodge is served by a Wild Blue satellite link.

What causes latency?

The factors that influence network latency are:

1) Wire transport speed.

Not to be confused with the amount of data a wire can carry in a second, I am referring to the raw speed at which data travels on a wire: once the data is on the wire, how long it takes to traverse it from end to end. For the most part, we can assume data travels at close to the speed of light, 186,000 miles per second (signals in copper and fiber actually move at roughly two-thirds of that).

2) Distance.

How far is the data traveling? Even though data travels at the speed of light, a hop across the United States (roughly 2,800 miles) will cost you about 15 milliseconds one way, and a hop up to a geostationary satellite and back down (about 44,000 miles) adds a minimum of roughly 240 milliseconds one way, or close to 500 milliseconds to a ping's round trip. I have worked through an example of how you can trace latency across a satellite link below.

3) Number of hops.

How many switching points are there between source and destination? Each hop requires the data to move from one wire to another, and this requires a small amount of waiting to get on the next wire. Each hop can be an additional 2 or 3 milliseconds.

4) Overhead processing on a hop.

This can also add up. At the end points, people often like to inspect the data on their firewall, usually for security reasons. Depending on the number of features enabled and the processing power of the firewall, this can add a wide range of latency. Normal is 1 or 2 milliseconds, but that can blow up to 50 milliseconds or, in some cases, even more when you turn on too many features on your firewall.
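To see how the four factors stack up, here is a rough one-way latency budget in Python. It uses the figures from this section (186,000 miles per second, 2 to 3 milliseconds per hop, 1 or 2 milliseconds for a normal firewall); the 2,800-mile coast-to-coast distance, the hop count, and the helper names are illustrative assumptions, so treat it as a back-of-the-envelope sketch rather than a measurement tool.

```python
# Rough one-way latency budget built from the four factors described above.
# The per-hop and firewall defaults are midpoints of the ranges in the text;
# the distances and hop counts in the example are illustrative assumptions.

SPEED_OF_LIGHT_MILES_PER_SEC = 186_000  # vacuum speed; copper and fiber are slower


def propagation_ms(distance_miles: float, speed_fraction: float = 1.0) -> float:
    """Time for a signal to cover distance_miles, in milliseconds.

    speed_fraction scales the speed of light, e.g. about 0.67 for fiber.
    """
    return distance_miles / (SPEED_OF_LIGHT_MILES_PER_SEC * speed_fraction) * 1000


def one_way_latency_ms(distance_miles: float, hops: int,
                       per_hop_ms: float = 2.5, firewall_ms: float = 1.5,
                       speed_fraction: float = 1.0) -> float:
    """Propagation (wire speed and distance) + switching per hop + firewall processing."""
    return (propagation_ms(distance_miles, speed_fraction)
            + hops * per_hop_ms
            + firewall_ms)


if __name__ == "__main__":
    # Hypothetical coast-to-coast path: 2,800 miles, 12 hops, one firewall.
    print(f"coast to coast: {one_way_latency_ms(2_800, hops=12):.1f} ms")
    # Ground -> geostationary satellite -> ground: about 44,000 miles.
    print(f"satellite leg:  {propagation_ms(44_000):.1f} ms")
```

With these assumptions the coast-to-coast path comes out near 47 ms, most of it from the hops rather than the distance, while the satellite leg alone is over 230 ms of pure propagation before any hop or firewall is counted.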

How much latency is too much?

It really depends on what you are doing. If it is a one-way conversation, like watching a Netflix movie, you are probably not going to care if the data arrives half a second after it was sent, but if you are talking interactively on a Skype call, you will find yourself talking over the other person quite often, especially at the beginning of a call.

Tracing latency across a satellite link

Note: I am doing this all from the command line on my Mac.

Step one: I have the IP address of a computer that I know is only accessible by satellite. So first I run a command called traceroute to find all the hops along the route.

localhost:~ root# traceroute 75.104.xxx.xxx

When I run this command, I get a list of every hop along the route. Traceroute also reports some millisecond times for each hop, but I am not sure I trust them, so I am not showing them.

Here is the output, with my notes on each hop:

traceroute to 75.104.xxx.xxx (75.104.xxx.xxx)

1 192.168.1.1 (192.168.1.1) – This is my local router or gateway, the first hop

2 95.145.80.1 (95.145.80.1) – This is the Comcast router, the first router upstream from my house, most likely at the local Comcast NOC.

3 te-8-1-ur01.boulder.co.denver.comcast.net (68.85.107.85) – We then go through a bunch of Comcast links

4 te-7-4-ur02.boulder.co.denver.comcast.net (68.86.103.122)

5 te-0-10-0-10-ar02.aurora.co.denver.comcast.net (68.86.179.97)

6 he-3-10-0-0-cr01.denver.co.ibone.comcast.net (68.86.92.25)

7 xe-5-0-2-0-pe01.910fifteenth.co.ibone.comcast.net (68.86.82.202)

8 173.167.58.162 (173.167.58.162) – and then we leave the Comcast network of routers here

9 if-1-1-2-0.tcore1.pdi-paloalto.as6453.net (66.198.127.85) – and then on to some other backbone router

10 66.198.127.94 (66.198.127.94)

11 * * *

13 75.104.xxx.xxx (This IP is on the other side of a satellite link)
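Picking the hop to ping, the last router that answered before the trace goes dark, can be automated. A minimal Python sketch, assuming BSD/macOS-style traceroute output where each line starts with the hop number, the hostname, and the IP in parentheses; `parse_traceroute` and `last_responding_hop` are hypothetical helper names, and the sample lines are copied from the trace above.

```python
import re
from typing import List, Optional, Tuple

# Start of a BSD/macOS-style traceroute line: "<hop>  <name> (<ip>)  ...times..."
HOP_RE = re.compile(r"^\s*(\d+)\s+(\S+)\s+\((\d{1,3}(?:\.\d{1,3}){3})\)")
# A hop where no router answered: "<hop>  * * *"
SILENT_RE = re.compile(r"^\s*(\d+)\s+\*(?:\s+\*)*\s*$")

# (hop number, hostname, IP); hostname and IP are None for a silent hop
Hop = Tuple[int, Optional[str], Optional[str]]


def parse_traceroute(output: str) -> List[Hop]:
    """Turn raw traceroute output into a list of hops, skipping unrecognized lines."""
    hops: List[Hop] = []
    for line in output.splitlines():
        m = HOP_RE.match(line)
        if m:
            hops.append((int(m.group(1)), m.group(2), m.group(3)))
            continue
        m = SILENT_RE.match(line)
        if m:
            hops.append((int(m.group(1)), None, None))
    return hops


def last_responding_hop(hops: List[Hop]) -> Optional[Hop]:
    """Last hop that answered before the first silent hop (or the final hop if none is silent)."""
    last: Optional[Hop] = None
    for hop in hops:
        if hop[2] is None:
            break
        last = hop
    return last


# Lines copied from the trace above (masked addresses left out).
SAMPLE = """\
traceroute to 75.104.xxx.xxx (75.104.xxx.xxx)
 8  173.167.58.162 (173.167.58.162)
 9  if-1-1-2-0.tcore1.pdi-paloalto.as6453.net (66.198.127.85)
10  66.198.127.94 (66.198.127.94)
11  * * *
"""

if __name__ == "__main__":
    print(last_responding_hop(parse_traceroute(SAMPLE)))
```

Treating the last responding hop as the edge of the satellite network is a heuristic: routers that do not answer traceroute probes can sit anywhere on the path, so the result is a starting point for the ping test rather than proof of where the satellite link begins.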

Now here is the cool part: I am going to ping the last IP address before the route goes up to the satellite, and then the remote computer on the other side, to see what the latency over the satellite hop is.

Note that the physical satellite does not have an IP address; a router here on Earth transmits data up and over the satellite link.

localhost:~ root# ping 66.198.127.94

PING 66.198.127.94 (66.198.127.94): 56 data bytes

64 bytes from 66.198.127.94: icmp_seq=0 ttl=56 time=42.476 ms

64 bytes from 66.198.127.94: icmp_seq=1 ttl=56 time=55.878 ms

64 bytes from 66.198.127.94: icmp_seq=2 ttl=56 time=42.382 ms

About 50 milliseconds.
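The "about 50 milliseconds" is an eyeball average of the three replies. A minimal Python sketch that pulls the `time=` values out of ping output and averages them, assuming the macOS ping line format shown above; `rtts_ms` is a hypothetical helper name.

```python
import re
from statistics import mean
from typing import List

# Pulls the round-trip time out of lines such as
#   "64 bytes from 66.198.127.94: icmp_seq=0 ttl=56 time=42.476 ms"
RTT_RE = re.compile(r"time=([\d.]+) ms")


def rtts_ms(ping_output: str) -> List[float]:
    """Return every round-trip time (ms) found in ping output; lines without time= are skipped."""
    return [float(m.group(1)) for m in RTT_RE.finditer(ping_output)]


# Output copied from the ping above.
SAMPLE = """\
PING 66.198.127.94 (66.198.127.94): 56 data bytes
64 bytes from 66.198.127.94: icmp_seq=0 ttl=56 time=42.476 ms
64 bytes from 66.198.127.94: icmp_seq=1 ttl=56 time=55.878 ms
64 bytes from 66.198.127.94: icmp_seq=2 ttl=56 time=42.382 ms
"""

if __name__ == "__main__":
    times = rtts_ms(SAMPLE)
    print(f"{len(times)} replies, average {mean(times):.1f} ms")
```

Lines without a `time=` field, such as `Request timeout` messages, are simply skipped, so the same helper works on the satellite ping as well.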

And the last hop to the remote computer.

localhost:~ root# ping 75.104.xxx.xxx

PING 75.104.180.156 (75.104.xxx.xxx): 56 data bytes

Request timeout for icmp_seq 0

64 bytes from 75.104.180.xxx: icmp_seq=0 ttl=109 time=1551.310 ms

64 bytes from 75.104.180.xxx: icmp_seq=1 ttl=109 time=1574.177 ms

64 bytes from 75.104.180.xxx: icmp_seq=2 ttl=109 time=1494.628 ms

Wow, that hop up over the satellite link added about 1500 milliseconds to my ping time!

That is a little more latency than I would have expected, but in fairness to Wild Blue, they do a good job at a reasonable price. The funny thing is that streaming audio works fine over the satellite link because it is not latency sensitive. However, a Skype call might be a bit more painful: 300 milliseconds is about the tolerance level where users start to notice latency on a phone call, 500 is manageable, and anything over 1000 starts to require a little planning and pausing before and after you speak.
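The satellite hop's share of the delay is just the difference between the two average round-trip times, and it can be set against the physical minimum for a geostationary link. A Python sketch, with the ping times copied from the output above; it assumes the 44,000-mile ground-to-satellite-to-ground path and the 186,000 miles per second used earlier, and `satellite_hop_ms` is a hypothetical helper name.

```python
from statistics import mean
from typing import Sequence

# Round-trip times (ms) copied from the two ping runs above.
GROUND_SIDE_MS = [42.476, 55.878, 42.382]        # 66.198.127.94, last hop before the satellite
REMOTE_SIDE_MS = [1551.310, 1574.177, 1494.628]  # remote computer behind the satellite link

# Rough physical floor: a ping crosses ground -> satellite -> ground twice
# (request and reply), about 44,000 miles each time at 186,000 miles per second.
SATELLITE_FLOOR_MS = 2 * 44_000 / 186_000 * 1000


def satellite_hop_ms(before: Sequence[float], after: Sequence[float]) -> float:
    """Latency added between two measured points: difference of their mean RTTs."""
    return mean(after) - mean(before)


if __name__ == "__main__":
    added = satellite_hop_ms(GROUND_SIDE_MS, REMOTE_SIDE_MS)
    print(f"satellite hop adds ~{added:.0f} ms")
    print(f"propagation floor ~{SATELLITE_FLOOR_MS:.0f} ms, "
          f"leaving ~{added - SATELLITE_FLOOR_MS:.0f} ms of other delay")
```

With these numbers the difference comes out near 1493 ms against a propagation floor of about 473 ms; the remaining second or so is presumably queuing and processing somewhere between hop 10 and the remote computer, though ping alone cannot say where.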


Is the Reseller Channel for Network Equipment Declining?

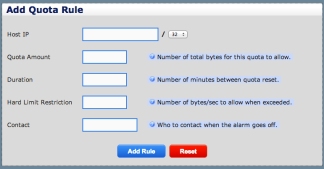

March 7, 2013 – netequalizer

Back in 2008, TMCnet posed an interesting question about traditional PBX vendors: has VoIP outgrown traditional business service channels? That got me wondering what is going on in the traditional network equipment channel. Is it starting to erode in favor of direct sales?

We are seeing a split in buying patterns.

1) Companies that do not have in-house staff generally make their equipment purchases based on the advice of their network consultants, VARs, or local resellers.

The line between network consultants and VARs has always been a bit muddy. Most network consultants tend to dabble in reselling. Hence this relationship behaves like the traditional channel, where consultants and VARs represent specific manufacturers and mark up equipment to make margins. Customers benefit because the true cost of the consulting needed to design and deploy their networks is subsidized by the margins the VARs make on their equipment sales.

2) On the other hand, companies and institutions with in-house IT staffs are starting to get away from the traditional equipment reseller. They are more likely to do their research online, and are more than willing to buy outside of a traditional channel. This creates a strange double-edged sword for OEMs, as they are heavily dependent on the relationships of their channel partners to move equipment. For the same reason that factory outlet stores are located outside of town, OEMs do not want to shoot themselves in the foot by selling direct and competing with their resellers.

Even though there is some degradation in the traditional channel, I don’t think we will see its demise any time soon for a couple of reasons.

1) Network solutions remain labor intensive, and expertise will always be in short supply. Even with cloud-based computing, there is still a good bit of infrastructure required at the enterprise, and this bodes well for the VARs and resellers who offer their expertise while acting as the conduit to move equipment, with markup, from the OEMs.

2) Network equipment itself resists becoming a commodity. Yes, home routers and such have gone that route, but with advanced features such as bandwidth optimization and security driving the market, network equipment remains complex enough to justify the value-added channel.

What are you seeing?

