By Art Reisman, CTO, APconnections, makers of NetEqualizer Internet Optimization Equipment

Outsourcing Communications with a Virtual PBX

CTO http://www.apconnections.net http://www.netequalizer.com

A new breed of applications emerging from the intersection of VoIP and broadband may soon make the traditional premise-based PBX a thing of the past. Virtual PBX, hosted and delivered by today’s telcos and cable operators, is quickly becoming an option for businesses looking to outsource portions of their communications network. Rather than purchase and maintain an expensive piece of equipment, you can now sign up for a pay-as-you-go service with all of the functionality of an onsite PBX but without the expense of buying and maintaining the equipment.

To some, this idea may sound like a return to the past and, in a sense, it is. AT&T began delivering PBX functionality through its Centrex services in the 1970s. However, upon closer investigation, it is clear that the functionality delivered and the economics of the two approaches are very different.

The Private Branch Exchange: A Brief Primer

A PBX or private branch exchange allows an organization to maintain a small number of outside lines when compared to the number of actual telephones and users within an organization. Users of the PBX share these outside lines for making telephone calls outside the organization (external to the PBX).
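The line-sharing idea can be put into numbers with the classic Erlang B formula, which estimates how often a caller finds every outside line busy. The sketch below is only an illustration: the 100-phone office, the 10 percent busy-hour usage per phone, and the 1 percent blocking target are assumptions chosen for the example, not figures from any particular PBX.

```python
def erlang_b(offered_erlangs: float, lines: int) -> float:
    """Probability that a new call finds all `lines` outside lines busy."""
    blocking = 1.0  # with zero lines, every call is blocked
    for n in range(1, lines + 1):
        # Standard Erlang B recurrence, numerically stable for large inputs
        blocking = (offered_erlangs * blocking) / (n + offered_erlangs * blocking)
    return blocking


def lines_needed(offered_erlangs: float, max_blocking: float) -> int:
    """Smallest number of outside lines that keeps blocking at or below the target."""
    lines = 0
    while erlang_b(offered_erlangs, lines) > max_blocking:
        lines += 1
    return lines


# Assumed office: 100 phones, each on an outside call 10% of the busy hour.
users = 100
erlangs_per_user = 0.1
offered = users * erlangs_per_user  # 10 erlangs of offered traffic

print(lines_needed(offered, max_blocking=0.01))  # prints 18
```

Under these assumed numbers, about 18 shared outside lines serve 100 phones while blocking fewer than one call in a hundred, which is the economy a PBX is built around.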

Onsite PBX became popular and matured in the 1980s when the cost of remote connectivity was extremely high and the customer control of hosted PBX-like services of the time (Centrex) was limited, if it was even offered. In 1980, providing advanced, remote PBX services to a building with 100 employees would have required AT&T to run 100 individual copper lines from the local exchange to each telephone at the site.

As more and more businesses opted to install a PBX onsite, competition for customer dollars drove ever more extensive “business-class” features into these devices, further differentiating the premise-based PBX from the hosted products offered by telephone companies. Over time, PBX offerings gradually standardized into the product set that today we have come to expect when we pick up any business phone: voice-mail, auto attendant, call queuing, conferencing, call transfer, and more.

Flash forward from 1980 to 2005. Today, 100 direct phone lines can be transported from one location to another over many miles with no more than one wire. Remote access to control a PBX outside of your building is also trivial to implement with a simple Web portal. Technological advances, coupled with feature stability and the broad appeal of PBX “applications,” make them a prime candidate for hosting.

A business starting today can have a full-featured hosted PBX with a single high-speed Internet connection. These virtualized services require no additional equipment to purchase or maintain.

Defining Virtual PBX

Businesses looking to purchase such a service today can expect to find significant differences in the features and functionality available among offerings marketed under the often interchangeable terms hosted PBX and virtual PBX. To alleviate confusion and provide a starting point in your quest to outsource your communications network, the perfect hosted PBX service would have the following features:

Auto-detection The PBX must dynamically detect remote stations from any place in the world and provide dial tone, as opposed to having a user dial in to obtain service (see the sidebar, Start with a Dial Tone).

Start with a Dial Tone

There are products on the market that remotely host a set of PBX services and require the user to dial in with a standard phone so the PBX can identify the caller. This is a viable approach to providing a hosted PBX with established stability. However, it does have a few restrictions not applicable to a pure hosted PBX.

When using the PBX services, the caller ties up a local phone line and blocks calls directly made to that line.

To obtain a dial tone for an outbound call, the user must first connect to the PBX. The only alternative is to dial out directly on the standard phone line, bypassing the PBX and giving up all of its cost and convenience benefits.

A truly hosted PBX solution must provide a dial tone without first dialing in.

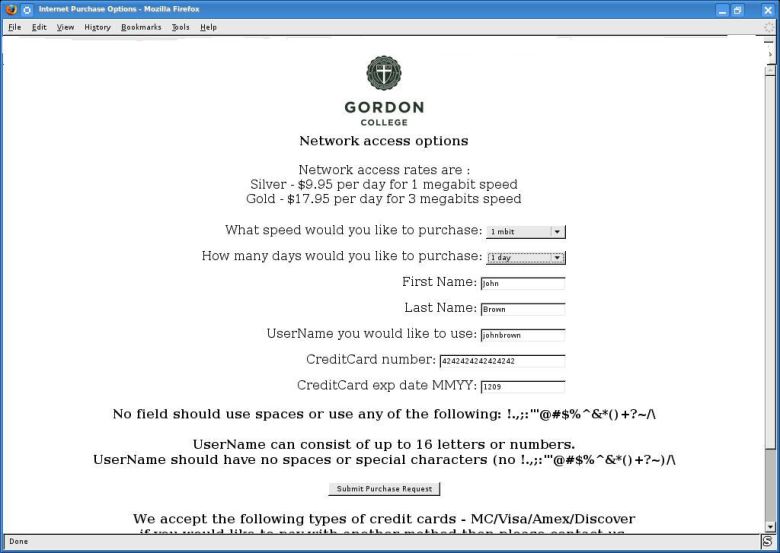

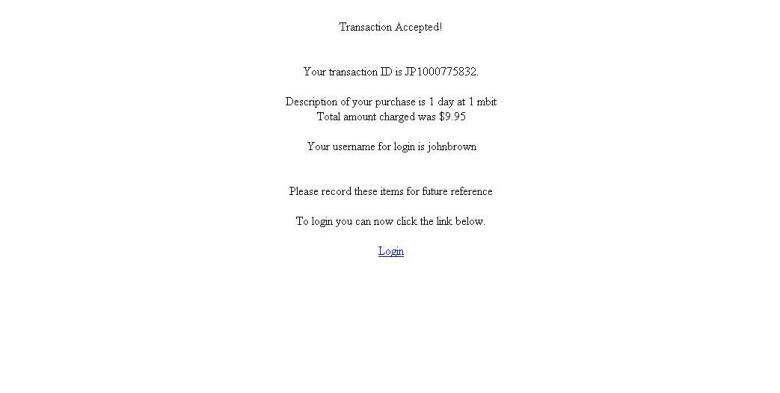

Service Provisioning New service provisioning must be self-service with no expensive customer premise equipment required. For example, a customer with a credit card and access to a provider’s Web page should be able to initiate worldwide service in a matter of minutes.

Standards Support Off-the-shelf SIP phones must be supported by the hosted service. A virtual PBX should not lock customers into using specific equipment or proprietary protocols.

Affordable Start-up costs should be minimal and usage-based, allowing a small business to seamlessly grow and add stations as needed, without ever needing a disruptive upgrade or requiring a large capital investment.

Level Rates Outbound and inbound toll rates should be provided at wholesale prices globally by the service provider. The customer should be able to count on a single published, competitive price for both outgoing and incoming calls.

Administration Each business using the service should have access to a private portal allowing them to administer features and options. The organization’s account and services should be secure and accessible to a designated administrator 24/7.

Bundled Applications The service must offer a minimum set of applications common to an onsite PBX. The most common include transfer, conference, forward, find me, follow me, voice mail, auto attendant, basic call reporting, and inbound and outbound caller ID.
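To make the auto-detection and standards-support items more concrete: with SIP, a phone typically announces its current location to the service by sending a REGISTER request, which is how a hosted PBX can find a station wherever it plugs in. The sketch below builds a minimal REGISTER message following the RFC 3261 header layout. The extension number, the domain pbx.example.com, and the IP address are hypothetical placeholders, and a real provider would normally also require digest authentication and some form of NAT handling, both of which are left out here.

```python
import uuid


def build_register(user: str, domain: str, local_ip: str,
                   local_port: int = 5060, expires: int = 3600) -> str:
    """Build a minimal, unauthenticated SIP REGISTER request as text."""
    aor = f"sip:{user}@{domain}"  # address of record: the extension's public identity
    branch = "z9hG4bK" + uuid.uuid4().hex[:16]  # RFC 3261 branch IDs start with this cookie
    headers = [
        f"REGISTER sip:{domain} SIP/2.0",
        f"Via: SIP/2.0/UDP {local_ip}:{local_port};branch={branch}",
        "Max-Forwards: 70",
        f"From: <{aor}>;tag={uuid.uuid4().hex[:8]}",
        f"To: <{aor}>",
        f"Call-ID: {uuid.uuid4().hex}@{local_ip}",
        "CSeq: 1 REGISTER",
        # Contact tells the PBX where this phone can be reached right now
        f"Contact: <sip:{user}@{local_ip}:{local_port}>",
        f"Expires: {expires}",
        "Content-Length: 0",
    ]
    # SIP uses CRLF line endings and a blank line to end the headers
    return "\r\n".join(headers) + "\r\n\r\n"


# Hypothetical extension 101 registering from a hotel-room address.
message = build_register("101", "pbx.example.com", "192.0.2.10")
print(message)
```

Because the Contact header carries the phone's current address, the same extension can register from the office one day and a hotel the next without anyone dialing in first.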

Technology Considerations

While the benefits of a hosted PBX solution are immediately obvious (elimination of equipment hard costs and of the specialized knowledge required to keep it up and running), there are drawbacks to consider when adopting an emerging technology.

The first point to consider is that the technology behind hosted PBX services has not yet developed to the point of large-scale enterprise deployments. Currently, the organizations that will see the most benefit from a hosted solution are small- to medium-sized businesses.

Quality of service (QoS), the shadow that follows every voice over IP application, is the overriding technology hurdle that consumers need to be aware of when considering a hosted PBX solution. Latency can also be an issue; the different routes that IP data takes across the Internet mean that voice packets can arrive late or unevenly spaced, causing speech breaks and dropped calls.

QoS and latency are key considerations when discussing bandwidth requirements and network architecture with potential vendors. When moving to an IP communications network, being undersold on bandwidth creates far more serious problems than being oversold.
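A rough per-call estimate is a useful sanity check on any bandwidth figure a vendor quotes. The sketch below assumes the widely used G.711 codec (64 kbit/s of audio) sent in 20 ms packets, with 40 bytes of IP, UDP, and RTP headers on each packet. The codec choice, the packet interval, and the figure of 20 simultaneous calls are assumptions for illustration; link-layer overhead such as Ethernet framing is ignored, so real usage will run somewhat higher.

```python
def call_bandwidth_kbps(codec_kbps: float = 64.0, packet_ms: float = 20.0,
                        header_bytes: int = 40) -> float:
    """One-direction, IP-layer bandwidth for a single call, in kbit/s."""
    packets_per_second = 1000.0 / packet_ms
    # Audio bytes carried in each packet
    payload_bytes = codec_kbps * 1000.0 / 8.0 / packets_per_second
    # Add the IP (20) + UDP (8) + RTP (12) headers to every packet
    return (payload_bytes + header_bytes) * 8.0 * packets_per_second / 1000.0


per_call = call_bandwidth_kbps()        # G.711 at 20 ms: 80.0 kbit/s
concurrent_calls = 20                   # assumed peak number of simultaneous calls
needed = per_call * concurrent_calls    # required in each direction

print(f"{per_call:.1f} kbit/s per call, {needed:.0f} kbit/s for {concurrent_calls} calls")
```

Voice traffic is symmetric, so this amount is needed both upstream and downstream, and the upstream side of a DSL or cable connection is usually the one that runs short first.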

Selecting a Vendor

The low barrier to entry for vendors looking to offer hosted PBX services has created a number of options for consumers and driven down costs, but customers need to be aware that not all service providers are equal.

Existing Infrastructure Deploying a worldwide hosted PBX service as outlined above requires a significant infrastructure investment to handle the centralized switching needed to move millions of simultaneous calls around the world. When investigating service providers, look for a vendor that has the knowledge to grow not only with your business but also with the broad adoption of the technology as a whole. Having a tested, existing infrastructure in place for business-class communications is key.

Service Provider Network One method of alleviating IP voice quality issues on a regional basis is by staying within a large service provider network. For example, if an organization uses a Qwest T3 trunk service at its headquarters and an employee travels to neighboring cities with Qwest DSL service in their hotels, it is unlikely that quality problems will be experienced at the carrier level. Choosing a vendor that understands how your organization will use the service should be an important part of your selection process.

Conclusion

While adoption is not yet widespread, hosted services are here and will only get better with time. As companies continue to seek the benefits of outsourcing the elements of their enterprise, from business processes to core technologies, adoption will continue to grow, making hosted PBX a technology to keep your eye on in 2005.

Note: The author uses a solution from Aptela and has found its support to be top notch, which was the main reason for switching about four years ago.

Four Reasons Why Peer-to-Peer File Sharing Is Declining in 2009

February 3, 2009, netequalizer

By Art Reisman, CTO of APconnections, makers of the plug-and-play bandwidth control and traffic shaping appliance NetEqualizer

I recently returned from a regional NetEqualizer tech seminar with attendees from Western Michigan University, Eastern Michigan University and a few regional ISPs. While having a live look at Eastern Michigan’s p2p footprint, I remarked that it was way down from what we had been seeing in 2007 and 2008. The consensus from everybody in the room was that p2p usage is waning. Obviously this is not a broad data set to draw a conclusion from, but we have seen the same trend at many of our customer installs (3 or 4 a week), so I don’t think it is a fluke. It is kind of ironic, with all the controversy around Net Neutrality and BitTorrent blocking, that the problem seems to be taking care of itself.

So, what are the reasons behind the decline? In our opinion, there are four:

1) Legal iTunes and other MP3 downloads are the norm now. They are reasonably priced and well marketed. These downloads still take up bandwidth on the network, but do not clog access points with connections like torrents do.

2) Most music aficionados are well stocked with the classics (bootleg or not) by now and are only grabbing new tracks legally as they come out. The days of downloading an entire collection of music at once seem to be over. Fans have their foundation of digital music and are simply adding to it rather than building it up from nothing as they were several years ago.

3) RIAA enforcement got its message out. This, coupled with reason #1 above, pushed users to go legal.

4) Legal, free and unlimited. YouTube videos are more fun than slow music downloads and they’re free and legal. Plus, with the popularity of YouTube, more and more television networks have caught on and are putting their programs online.

Despite the decrease in p2p file sharing, ISPs are still experiencing more pressure on their networks than ever from Internet congestion. YouTube and Netflix are more than capable of filling the void left by waning BitTorrent traffic. So, don’t expect the controversy over traffic shaping and the use of bandwidth controllers to go away just yet.
